|

|

|

|

|

|

| Research at Helsinki University of Technology is carried out by the Department of Media Technology. Key research topics include optical tracking and 3D user interfaces for modeling and animation. The goal is to develop new intuitive and natural bimanual interfaces for creative tasks. Accessibility of the results is also a consideration: we aim to develop solutions that can easily be taken into use outside the laboratory and that run on commodity hardware. |

|

|

| Optical Tracking |

|

|

|

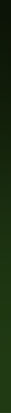

For our planned user interface we need to track the user's hand and finger movements, as well as the head for immersive rendering. We have built a preliminary optical tracking system that relies on infrared-sensitive cameras, infrared LEDs, and retroreflective 2D markers. The tracker supports multiple cameras, employs Kalman filtering, and works over a network of computers; we are constantly improving it.
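
The Kalman filtering step mentioned above can be sketched for a single coordinate of one tracked marker. This is only an illustration under stated assumptions, not the HandsOn tracker's code: the constant-velocity motion model, the noise values, and the names `AxisKalman` and `filter_track` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AxisKalman:
    """Constant-velocity Kalman filter for one coordinate of a marker.

    The state is (pos, vel); p00..p11 are the entries of the 2x2 state
    covariance. All noise values are illustrative assumptions.
    """
    pos: float = 0.0
    vel: float = 0.0
    p00: float = 1.0
    p01: float = 0.0
    p10: float = 0.0
    p11: float = 1.0
    q_pos: float = 1e-4   # assumed process noise added to the position variance
    q_vel: float = 1e-2   # assumed process noise added to the velocity variance
    r: float = 0.05       # assumed measurement noise variance

    def predict(self, dt: float) -> None:
        """Advance the state by dt seconds: P <- F P F^T + Q, F = [[1, dt], [0, 1]]."""
        self.pos += dt * self.vel
        p00 = self.p00 + dt * (self.p01 + self.p10) + dt * dt * self.p11
        p01 = self.p01 + dt * self.p11
        p10 = self.p10 + dt * self.p11
        self.p00 = p00 + self.q_pos
        self.p01 = p01
        self.p10 = p10
        self.p11 = self.p11 + self.q_vel

    def update(self, z: float) -> None:
        """Correct the state with a measured position z (H = [1, 0])."""
        innovation = z - self.pos
        s = self.p00 + self.r          # innovation variance
        k0 = self.p00 / s              # gain applied to the position
        k1 = self.p10 / s              # gain applied to the velocity
        self.pos += k0 * innovation
        self.vel += k1 * innovation
        p00, p01 = self.p00, self.p01
        self.p00 = (1 - k0) * p00      # P <- (I - K H) P
        self.p01 = (1 - k0) * p01
        self.p10 = self.p10 - k1 * p00
        self.p11 = self.p11 - k1 * p01


def filter_track(measurements, dt):
    """Run the filter over a sequence of positions; return (pos, vel) per step."""
    kf = AxisKalman()
    estimates = []
    for z in measurements:
        kf.predict(dt)
        kf.update(z)
        estimates.append((kf.pos, kf.vel))
    return estimates
```

A 2D or 3D marker could be handled by running one such filter per axis. A real multi-camera tracker would more likely use a joint state with a full covariance matrix.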

Our goal is a robust, fast, accurate, unobtrusive, stable, and affordable two-handed interaction tool. Affordable here means that the tracker should be composed of cheap off-the-shelf components, ideally allowing anyone to build their own system and use our software.

Hand tracking is already implemented, while head and finger tracking are in the works. For finger tracking, the initial research is complete and we are now starting the implementation, for which we plan to use Bayesian modeling with a particle filter. Inertial tracking was also considered as an additional enhancement, but after a literature review and some experiments we gave up on the idea. |

|

|

| |

|

| Bimanual 3D User Interfaces for Modeling |

|

|

Traditionally, 3D modeling has been done using a two-dimensional user interface built on a keyboard, mouse, and monitor setup. In the HandsOn project we are designing and implementing novel 3D interfaces that allow designers to shape virtual objects in a fully three-dimensional workspace.

One part of our research is to test stereoscopic and immersive rendering setups that can give the user the illusion of being present in the same space as the virtual objects being designed. The second part is the development of new interaction models that allow users to work on 3D objects directly with their hands, i.e. using the orientation, position, and gestures of both hands as input. The goal is to create a work experience as intuitive and natural as working with a physical medium such as a lump of clay or a stack of Lego blocks, or even sketching with a pencil on a piece of paper. In particular, we pursue better support for the creative drafting phase of design and for the overall design of organic shapes.

The third part of the 3D modeling user interface research is user testing of the 3D user interface prototypes we create. The testing aims to evaluate the usability of the novel 3D user interfaces. Through it we also aim to gain a better understanding of the factors that affect the user experience and of the benefits that a 3D user interface can offer. |

|

| |

|

| Animation in 3D Virtual Environments |

|

|

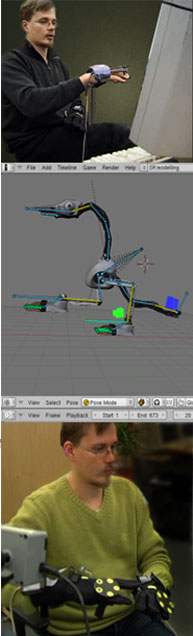

Current practice in the field of animation concentrates on two interface choices. The first is the desktop computer application interface, which offers very few possibilities for modeling with natural movements. Editing is done purely through 2D interaction with a mouse and keyboard, and real-time animating is often not viable. At the other extreme is motion capture, where motion from a real-world actor is mapped to an animated character. The actor is tracked by attaching markers to different parts of the body, and the movement of the markers can be transformed quite easily into the movement of a character by using skeleton models and inverse kinematics, which determines the movement of the virtual bones when the movements of the bone endpoints are known. Between these two extremes there have been few implementations. The goal of the HandsOn animation research is to study the area between full-body motion tracking and the desktop interface. The initial idea has been that animation could be done with different hand gestures in a 3D virtual environment.
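
The inverse kinematics idea above, recovering bone movement from a known endpoint, can be illustrated with a planar two-bone chain such as an upper arm and forearm. This is a minimal sketch under assumptions of our own: the chain is 2D, the root sits at the origin, and the function names `solve_two_bone_ik` and `forward` are hypothetical. It is not taken from the HandsOn software or from Blender.

```python
import math


def solve_two_bone_ik(l1, l2, x, y):
    """Return (root_angle, joint_angle) in radians so that a two-bone chain
    with bone lengths l1 and l2, rooted at the origin, reaches (x, y).

    If the target is out of reach, the cosine is clamped, so the chain
    stretches or folds as far as it can toward the target direction.
    """
    d2 = x * x + y * y
    # Law of cosines gives the bend at the middle joint.
    cos_joint = (d2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    cos_joint = max(-1.0, min(1.0, cos_joint))
    joint = math.acos(cos_joint)
    # Aim the first bone at the target, then compensate for the bend.
    root = math.atan2(y, x) - math.atan2(l2 * math.sin(joint),
                                         l1 + l2 * math.cos(joint))
    return root, joint


def forward(l1, l2, root, joint):
    """Forward kinematics: endpoint position for the given joint angles."""
    ex = l1 * math.cos(root) + l2 * math.cos(root + joint)
    ey = l1 * math.sin(root) + l2 * math.sin(root + joint)
    return ex, ey
```

Feeding the solved angles back through `forward` should reproduce any reachable target, which is a convenient sanity check. Full-body skeletons need 3D rotations and joint limits, which this sketch leaves out.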

The approach chosen was to take an existing modeling and animation application, Blender, and extend it so that 3D interaction becomes possible. Several animation techniques are being studied. The first is a virtual puppet animation style with key frames, in which the user moves the skeleton model of an animated character. The poses of the character are stored as key frames, and the animation software takes care of the interpolation between them. Building the animation directly in 3D this way might well feel more natural.
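
The key frame workflow described above, stored poses with the software filling in the frames between them, can be sketched as follows. The sketch assumes that a pose is a mapping from joint name to angle, that every key frame contains the same joints, and that interpolation is linear. Animation packages typically offer smoother curves as well, and the function name `interpolate_pose` is hypothetical rather than part of Blender's API.

```python
from bisect import bisect_right


def interpolate_pose(keyframes, frame):
    """Linearly interpolate a pose at the given frame.

    keyframes: list of (frame_number, {joint_name: angle}) sorted by
    frame_number, all poses sharing the same joint names (an assumption
    of this sketch). Frames outside the keyed range hold the nearest pose.
    """
    frames = [f for f, _ in keyframes]
    if frame <= frames[0]:
        return dict(keyframes[0][1])
    if frame >= frames[-1]:
        return dict(keyframes[-1][1])
    i = bisect_right(frames, frame)        # first key frame after `frame`
    f0, pose0 = keyframes[i - 1]
    f1, pose1 = keyframes[i]
    t = (frame - f0) / (f1 - f0)           # 0 at f0, 1 at f1
    return {joint: pose0[joint] + t * (pose1[joint] - pose0[joint])
            for joint in pose0}
```

For example, with an elbow keyed at 0 degrees on frame 0 and 90 degrees on frame 10, frame 5 evaluates to 45 degrees.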

Another interesting technique is animation with partial motion capture, in which only some of the character's movements are produced with real-time motion capture while the rest are produced with key-frame-based techniques. For example, a character might be placed in a pose with traditional methods, after which the animator grabs its hands in virtual reality and the animator's hand movements are mapped to the movements of the character's hands. |

|

| |

|

|

|